AI Regulation Is Creating New Moats

March 11, 2026 by Harshit Gupta

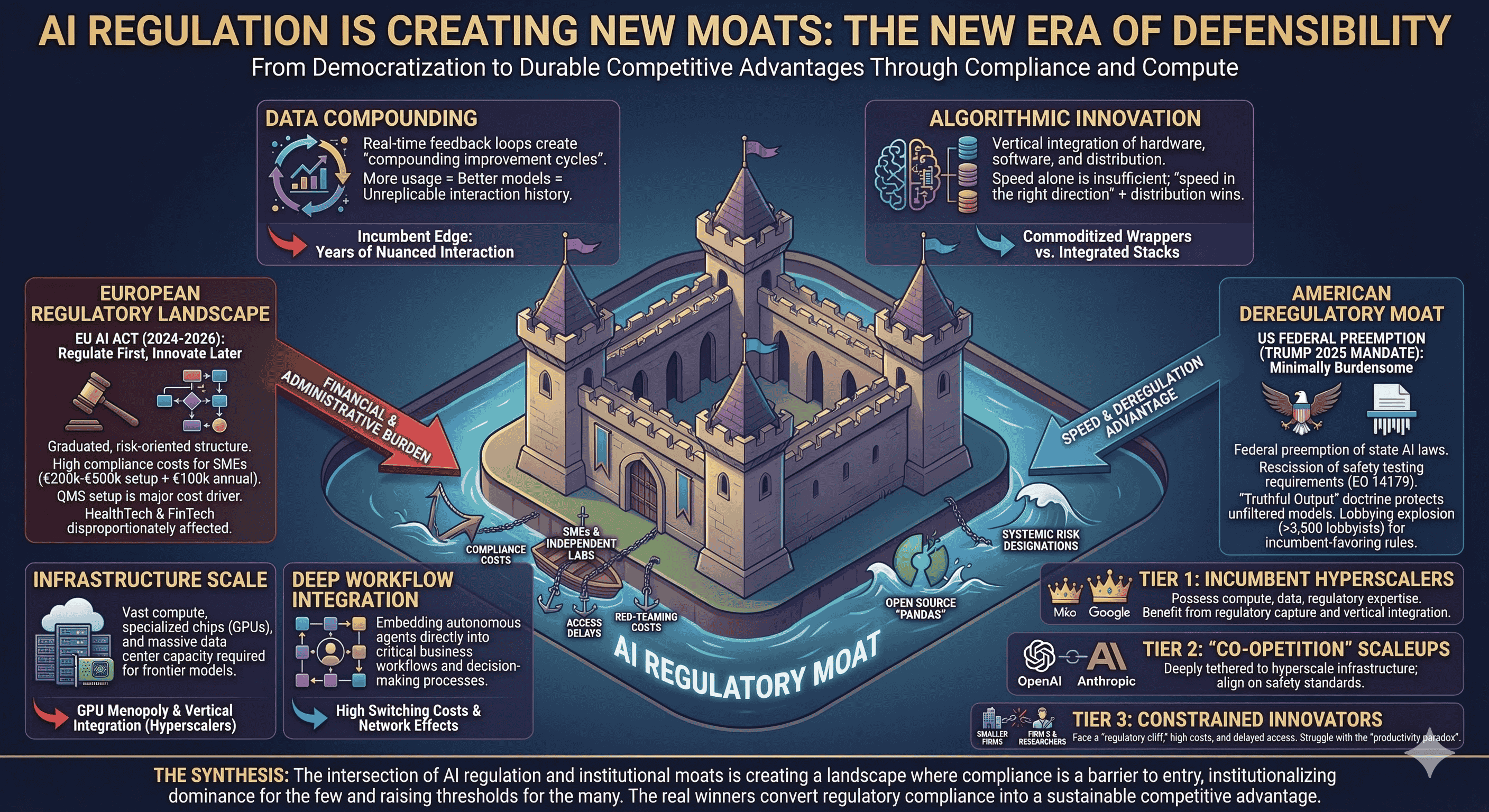

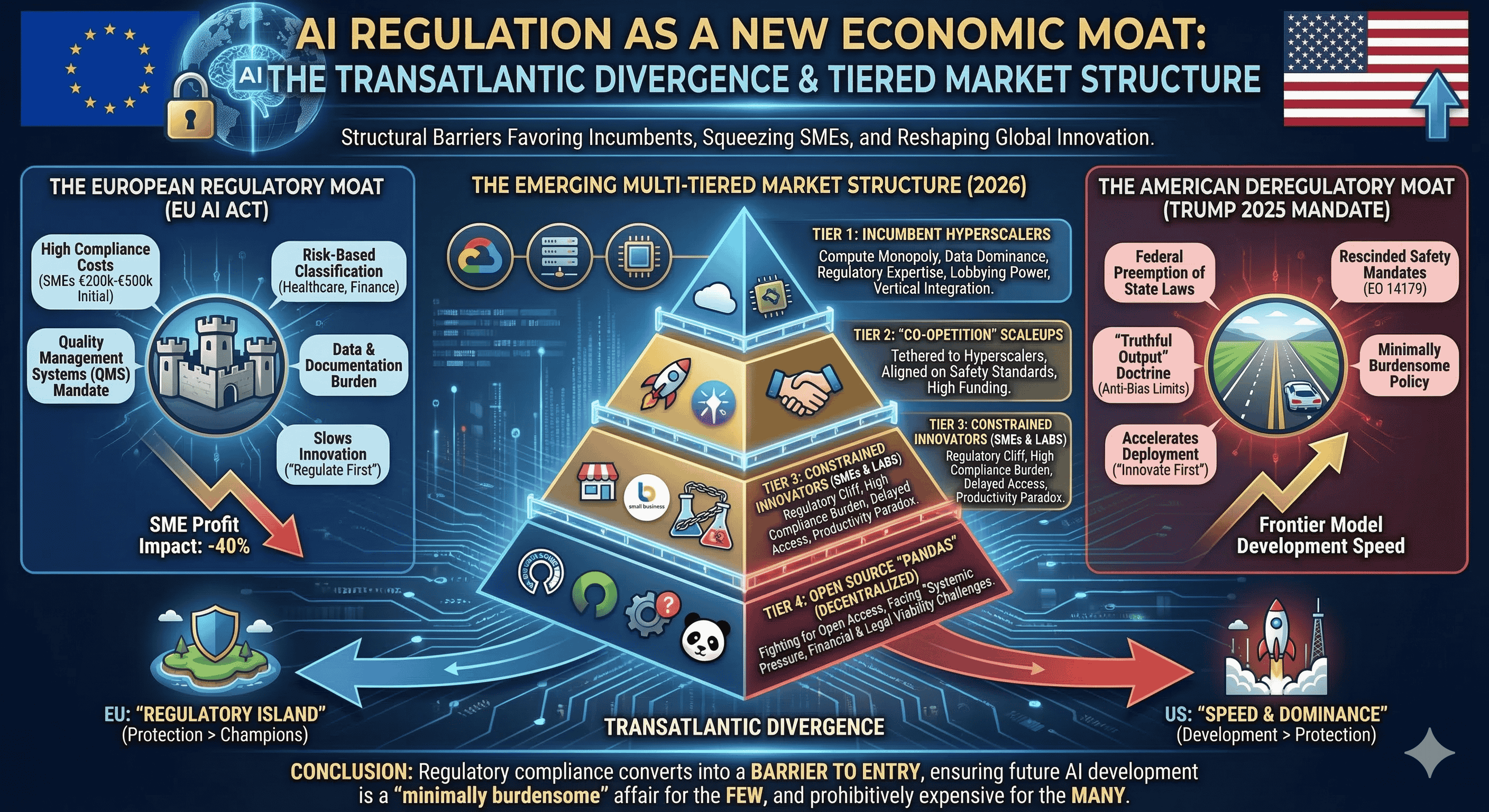

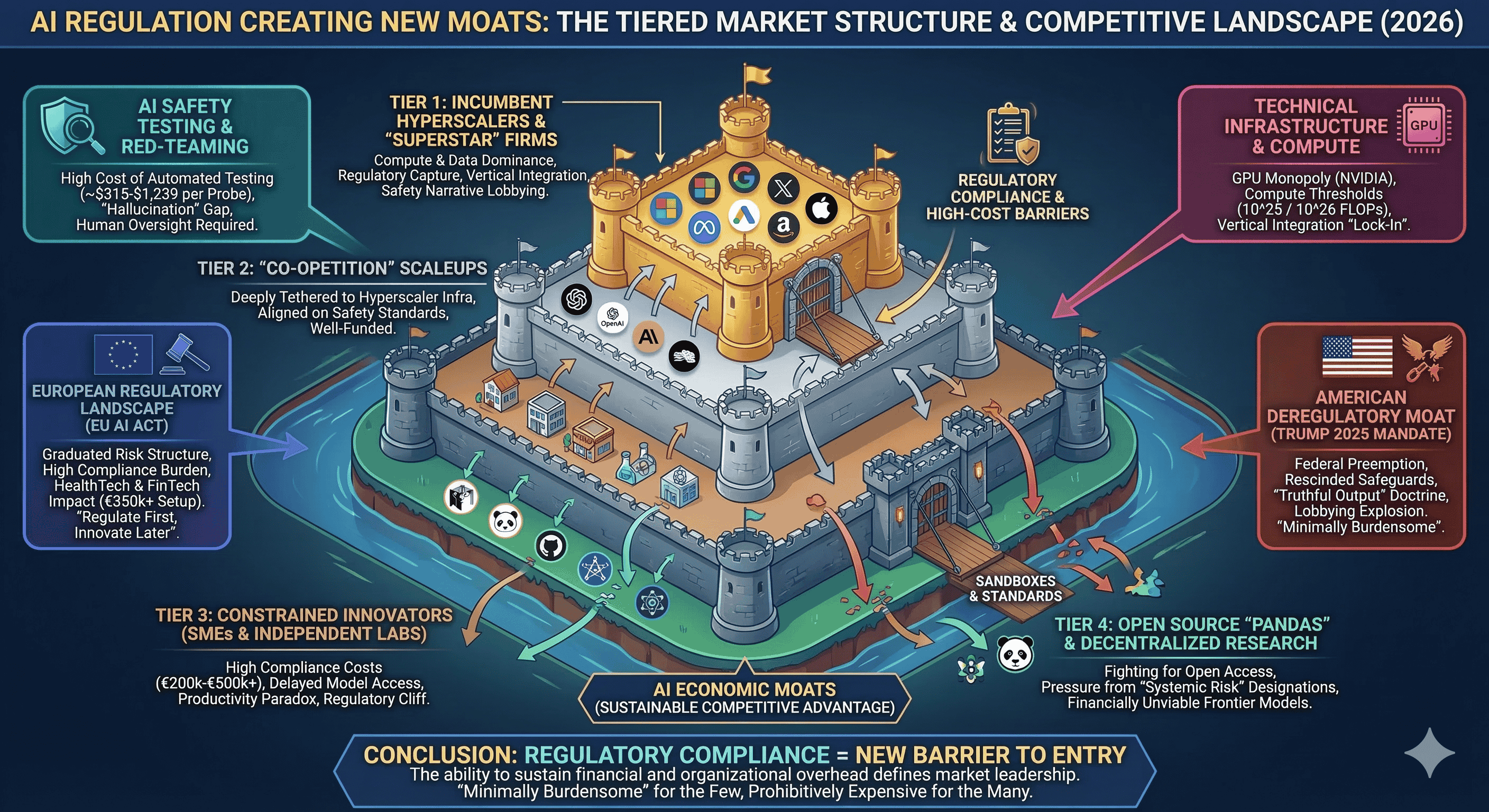

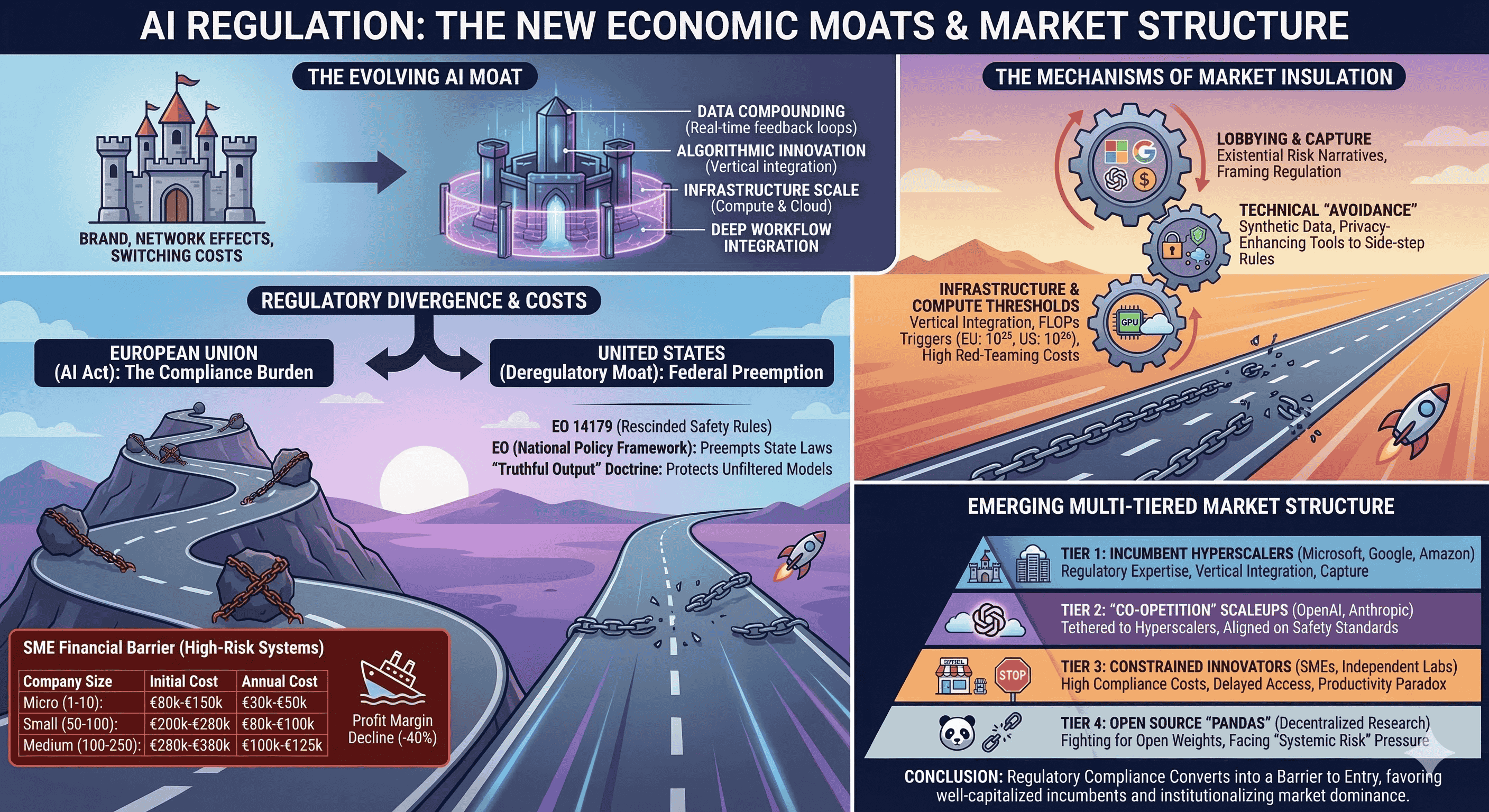

The competitive landscape of the global technology sector is undergoing a structural transformation as artificial intelligence shifts from a phase of rapid differentiation to one of durable defensibility. While the initial wave of AI development was characterized by the democratization of access to large language models, the strategic focus has transitioned toward the construction of "economic moats"—sustainable competitive advantages that protect a firm’s market position and pricing power. Traditionally, these barriers were built through brand recognition, network effects, and high switching costs. However, the emergence of agentic AI and the concurrent rise of complex regulatory frameworks have introduced a new paradigm of market insulation. In this new environment, the intersection of technical requirements and legislative mandates is inadvertently creating structural barriers that favor well-capitalized incumbents, thereby institutionalizing the market dominance of a few "superstar" firms while simultaneously raising the threshold for entry for small and medium-sized enterprises (SMEs) and independent research labs.

The Conceptual Evolution of the AI Moat

The concept of the economic moat, popularized by financial strategists to describe defensive business barriers, has been fundamentally redefined by the unique characteristics of agentic AI systems. Unlike traditional software, AI agents exhibit autonomy, memory, goal-orientation, and contextual adaptation. These features enable the creation of defensive barriers through four primary mechanisms: data compounding, algorithmic innovation, infrastructure scale, and deep workflow integration.

The data moat is no longer merely about the volume of information collected; it centers on how that data becomes increasingly valuable through continuous agentic interaction. Traditional organizations collect data and perform periodic analysis, but companies with integrated agentic systems create feedback loops where every interaction refines the model's performance in real-time. This process generates a "compounding improvement cycle" that becomes faster and more sophisticated as it scales, making it nearly impossible for competitors to replicate the months or years of nuanced interaction history that define a market leader’s edge.

Strategic defensibility is further reinforced through "innovation stacks" where hardware, software, and distribution layers are vertically integrated to create a cohesive defensive posture. In the current era, speed alone is insufficient; rather, "speed in the right direction" and the ability to convert early momentum into structural barriers like switching costs and network effects determine long-term success. Consequently, the industry is witnessing a shift where "UI wrappers" and minor feature tweaks are easily commoditized by incumbents with superior distribution channels, leaving true defensibility to those who can navigate the high-dimensionality of AI risk and compliance.

The European Regulatory Landscape: The AI Act and the Institutionalization of Costs

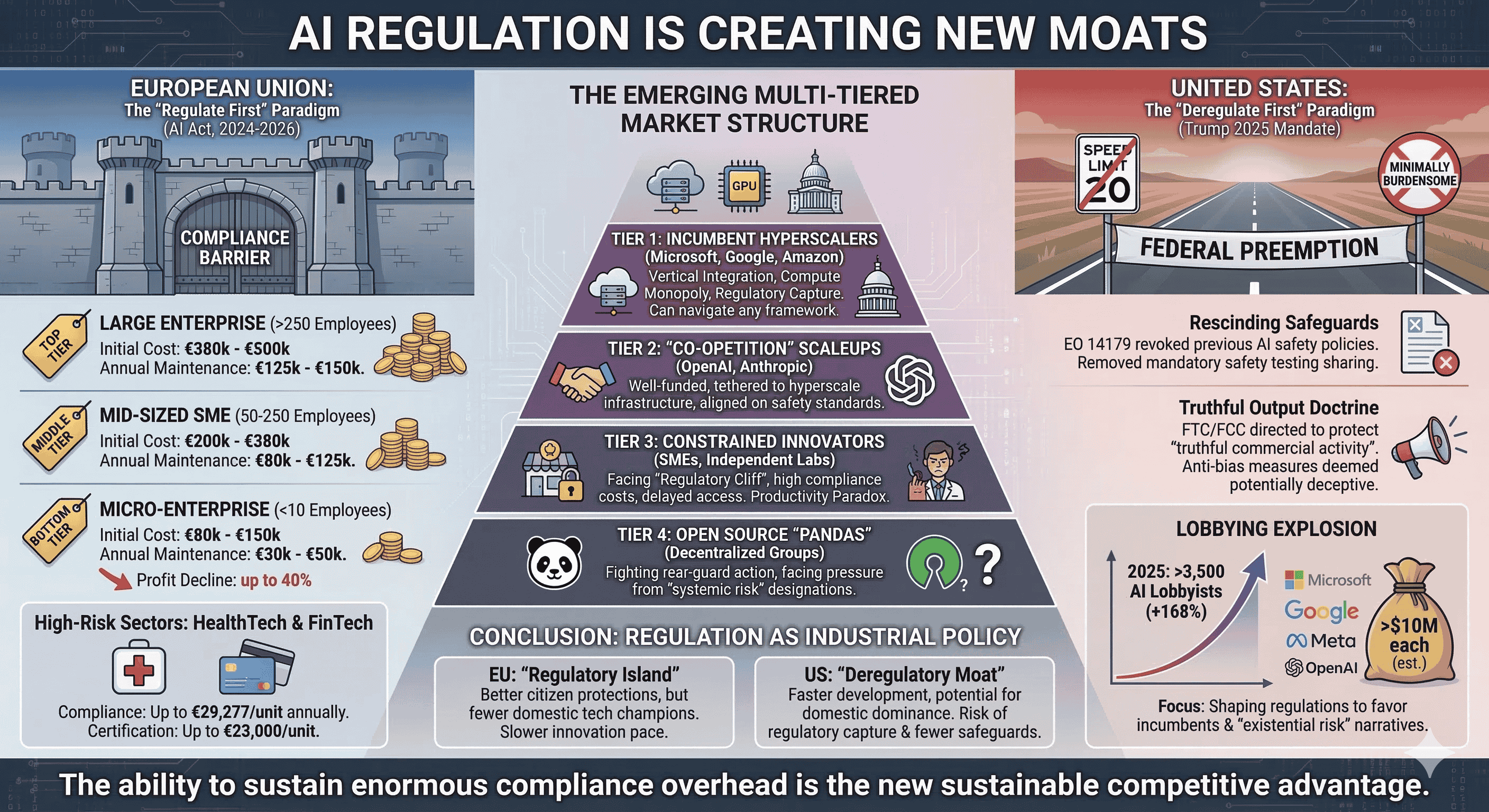

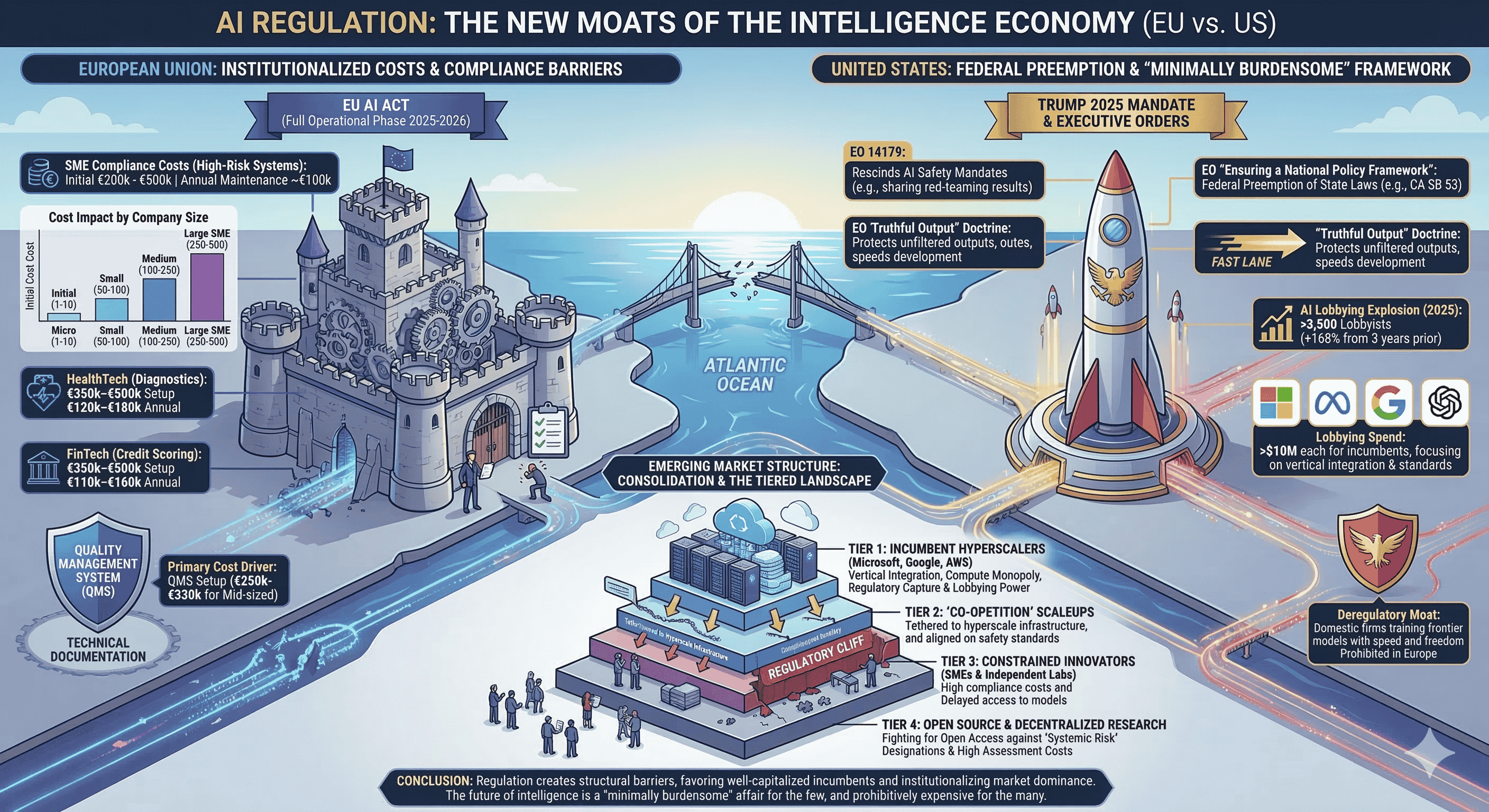

The European Union’s Artificial Intelligence Act (AI Act), adopted in 2024 and entering key operational phases in 2025 and 2026, represents the most comprehensive attempt to regulate the AI lifecycle based on a graduated, risk-oriented structure. While intended to ensure that AI systems are safe, transparent, and accountable, the Act’s stringent requirements for "high-risk" systems—particularly in sensitive sectors like healthcare, finance, and critical infrastructure—impose a financial and administrative burden that scales disproportionately for smaller actors.

Quantifying the Financial Barrier to Entry

For organizations deploying high-risk AI systems, the compliance costs are substantial, often consuming a significant portion of an SME’s profit margin. Initial implementation costs for a company with 50 to 100 employees are estimated between €200,000 and €280,000, with ongoing annual maintenance costs reaching up to €100,000. Larger SMEs with up to 500 employees can face setup costs as high as €500,000. The impact on profitability is stark: an SME with a €10 million turnover could see its profits decline by 40% solely due to the overhead of AI Act compliance.

| Company Size (Employees) | Initial Setup Cost | Annual Maintenance Cost |
| --- | --- | --- |
| 1 - 10 (Micro-enterprise) | €80,000 – €150,000 | €30,000 – €50,000 |
| 50 - 100 | €200,000 – €280,000 | €80,000 – €100,000 |
| 100 - 250 | €280,000 – €380,000 | €100,000 – €125,000 |
| 250 - 500 | €380,000 – €500,000 | €125,000 – €150,000 |

Note: Figures represent estimated compliance costs for high-risk AI systems.
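To see how these ranges add up over a planning horizon, the arithmetic can be sketched as one-off setup cost plus annual maintenance multiplied by the number of years. The following is a minimal Python sketch that uses only the table's estimates; the function name `cumulative_cost` and the three-year horizon are illustrative assumptions, not figures from the underlying estimates.

```python
# Estimated high-risk compliance cost ranges from the table above (EUR).
# Keys are employee bands; values are
# ((setup_low, setup_high), (annual_low, annual_high)).
COST_BANDS = {
    "1-10": ((80_000, 150_000), (30_000, 50_000)),
    "50-100": ((200_000, 280_000), (80_000, 100_000)),
    "100-250": ((280_000, 380_000), (100_000, 125_000)),
    "250-500": ((380_000, 500_000), (125_000, 150_000)),
}


def cumulative_cost(band: str, years: int) -> tuple[int, int]:
    """Return (low, high) total cost: setup plus `years` of maintenance."""
    (setup_lo, setup_hi), (annual_lo, annual_hi) = COST_BANDS[band]
    return setup_lo + years * annual_lo, setup_hi + years * annual_hi


if __name__ == "__main__":
    # The 3-year horizon is a hypothetical planning window.
    for band in COST_BANDS:
        low, high = cumulative_cost(band, years=3)
        print(f"{band:>8} employees: EUR {low:,} to EUR {high:,} over 3 years")
```

Under these assumptions, a 50 to 100 person company would face roughly €440,000 to €580,000 over three years, which illustrates why the recurring maintenance line matters as much as the headline setup figure.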

The breakdown of these expenditures reveals that the primary driver of cost is the establishment of a Quality Management System (QMS), which includes documented policies, risk management frameworks, and development workflow integration. A QMS setup for a mid-sized company (100-250 employees) typically costs between €250,000 and €330,000. Furthermore, technical documentation requirements, post-market monitoring, and legal counsel fees add layers of recurring financial strain that favor incumbents with existing regulatory departments.

Sector-Specific Impacts and the HealthTech Challenge

The regulatory burden is particularly acute in industries where AI systems are likely to be classified as high-risk, such as healthtech and fintech. In healthcare, the AI Act classifies many diagnostic and patient triage systems as "high-risk" because they directly impact life and health outcomes. Compliance for a single medical AI unit can reach €29,277 annually, with certification burdens alone costing up to €23,000 per unit. This creates a market concentration where only larger, well-funded organizations can afford to maintain a portfolio of certified products, potentially stifling entrepreneurial innovation in digital health.

| Industry Use Case | Typical Setup Cost | Ongoing Annual Cost |
| --- | --- | --- |
| HealthTech (Diagnostics) | €350,000 – €500,000 | €120,000 – €180,000 |
| FinTech (Credit Scoring) | €350,000 – €500,000 | €110,000 – €160,000 |
| SaaS HR (Screening) | €280,000 – €380,000 | €90,000 – €130,000 |
| EdTech (Assessment) | €250,000 – €350,000 | €80,000 – €120,000 |

Note: Use-case specific estimates for high-risk system deployments.

The American Deregulatory Moat: Federal Preemption and the Trump 2025 Mandate

While the European Union has opted for a "regulate first, innovate later" approach, the United States has undergone a dramatic shift toward a "minimally burdensome" national policy framework following the change in administration in early 2025. This deregulatory environment aims to sustain American AI dominance by removing what the administration views as "excessive state regulation" that creates a "patchwork" of 50 discordant regimes.

Rescinding the Predecessor’s Safeguards

On January 23, 2025, Executive Order 14179 (Removing Barriers to American Leadership in Artificial Intelligence) directed agencies to review and unwind policies adopted under the previous administration’s Executive Order 14110, which had itself been revoked days earlier. This included the rescission of requirements for developers of the most powerful AI systems to share safety testing and red-teaming results with the federal government. The administration argued that such mandates acted as "barriers to innovation" and paralyzed the industry by imposing bureaucratic delays.

The Strategy of Federal Preemption

The December 11, 2025 Executive Order, "Ensuring a National Policy Framework for Artificial Intelligence," established an aggressive strategy of federal preemption. It directed the Attorney General to establish an AI Litigation Task Force with the sole responsibility of challenging state AI laws—such as Colorado’s "algorithmic discrimination" provisions—that are deemed inconsistent with federal policy. The administration views these state laws as unconstitutionally regulating interstate commerce and forcing "ideological bias" into AI models.

To further enforce this national standard, the administration has used federal funding restrictions as leverage. States that enact "onerous" AI laws may be found ineligible for significant federal grants, including remaining funds from the $42 billion Broadband Equity, Access, and Deployment (BEAD) program.

The "Truthful Output" Doctrine as a Competitive Edge

A central tenet of the 2025 US policy is the concept of "truthful outputs". The administration has directed the Federal Trade Commission (FTC) and the Federal Communications Commission (FCC) to identify state requirements that compel AI developers to alter model outputs—such as anti-bias measures—as potentially deceptive acts that interfere with "truthful" commercial activity. By protecting the right of models to generate unfiltered outputs, the US creates a deregulatory moat where domestic firms can train and deploy frontier models with a level of freedom—and speed—that is prohibited in Europe.

Lobbying and the Institutionalization of "AI Safety"

The rapid growth of the AI industry has been mirrored by an explosion in corporate lobbying, which has increasingly focused on shaping the regulatory environment to favor established players. In 2025, over 3,500 lobbyists—accounting for 25% of all federal lobbyists in the US—reported lobbying on AI issues, a 168% increase from three years prior.

Regulatory Capture through "Existential Risk" Narratives

Critics and independent researchers have raised alarms about the potential for "regulatory capture," where dominant firms use their economic power to exert influence over the very rules intended to govern them. One mechanism of this capture is the framing of AI as a potentially "extinction-inducing technology," which justifies highly restrictive licensing regimes that only a few firms can afford to navigate. Prominent industry figures, such as Sam Altman, have lobbied for licensing requirements for frontier models, a position that Andrew Ng and Yann LeCun have criticized as "existential nonsense" designed to kill competition from open-source alternatives and startups.

| Corporate Player | 2024 Lobbying Expenditure | Primary Policy Objectives |
| --- | --- | --- |
| Microsoft | >$10 Million | Vertical integration, cloud dominance |
| Meta | >$10 Million | Open access, infrastructure, compute |
| | >$10 Million | Energy sourcing, federal data access |
| OpenAI | $1.76 Million | Licensing, safety alignment standards |
| Anthropic | (Not Disclosed) | Responsible scaling, export controls |

Note: Lobbying spend figures are estimates for federal initiatives.

The Use of Technical "Avoidance" Mechanisms

Research indicates that companies are increasingly developing technologies—such as federated learning, synthetic data, and encryption—as mechanisms to place their operations outside the scope of traditional regulatory frameworks. By framing these toolkits as "privacy-enhancing" or "bias-constraining," firms can redirect regulatory attention away from mandatory legal requirements and toward voluntary, self-defined standards. This allows incumbents to "side-step" costly subpoenas or information access requests while appearing compliant with the spirit of the law.

Technical Infrastructure and the Concentration of Compute

The physical infrastructure required for AI development constitutes one of the most formidable moats in the current technological era. Training "frontier" models requires vast amounts of computational power (compute), specialized semiconductor chips (GPUs), and massive data center capacity.

The GPU Monopoly and Vertical Integration

Concentration in AI markets is fueled by economies of scale and vertical integration. Major players such as Microsoft, Google, and Amazon not only develop AI models but also control the cloud infrastructure and the development of the final applications. In 2022, AWS, Microsoft Azure, and Google Cloud together controlled nearly 70% of the global cloud market, creating a "lock-in" dynamic where users struggle to leave dominant ecosystems.

The cost of training frontier models like GPT-4, which required over 25,000 NVIDIA A100 GPUs and millions of dollars in electricity, is a barrier that smaller companies simply cannot overcome without forming "co-opetition" agreements with hyperscalers. These agreements, while necessary for resource access, often increase the dependency of startups on big tech firms, further restricting their strategic maneuverability.

Compute Thresholds as Regulatory Triggers

Regulatory frameworks often use training compute thresholds as a proxy for a model's potential systemic risk. The EU AI Act, for example, sets a presumptive "systemic risk" threshold for models trained with more than 10^25 floating-point operations (FLOPs). Similarly, California’s SB 53 and the now-rescinded federal EO 14110 used a 10^26 FLOP threshold.

| Regulatory Trigger | Threshold Value | Imposed Obligations |
| --- | --- | --- |
| EU AI Act (Systemic Risk) | 10^25 FLOPs | Adversarial testing, risk mitigation |
| California SB 53 (Frontier) | 10^26 FLOPs | Whistleblower protection, safety reports |
| Biden EO 14110 (Dual-Use) | 10^26 FLOPs | Quarterly notification of activities |

While these thresholds provide objective criteria, they also create a "regulatory cliff" where developers who exceed the threshold face an exponentially higher compliance burden, effectively discouraging mid-sized labs from scaling their models beyond a certain point unless they have the backing of a major incumbent.
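The cliff effect is easier to see with numbers. The sketch below checks a planned training run against the EU AI Act and California SB 53 thresholds cited in this section (the rescinded EO 14110 is left out). It assumes the widely used rule of thumb of roughly 6 FLOPs per parameter per training token, which is an approximation and not part of either legal text; the function names and the example model sizes are hypothetical.

```python
# Training-compute thresholds as cited in this section (FLOPs).
THRESHOLDS = {
    "EU AI Act (systemic risk)": 1e25,
    "California SB 53 (frontier)": 1e26,
}


def estimate_training_flops(n_params: float, n_tokens: float) -> float:
    """Rule-of-thumb estimate (assumption): ~6 FLOPs per parameter per token."""
    return 6.0 * n_params * n_tokens


def triggered_regimes(flops: float) -> list[str]:
    """Return the regimes whose threshold the given training compute exceeds."""
    return [name for name, limit in THRESHOLDS.items() if flops > limit]


if __name__ == "__main__":
    # Hypothetical runs: (parameters, training tokens).
    runs = {"mid-sized lab": (70e9, 15e12), "frontier lab": (400e9, 15e12)}
    for label, (params, tokens) in runs.items():
        flops = estimate_training_flops(params, tokens)
        print(f"{label}: {flops:.2e} FLOPs -> {triggered_regimes(flops) or 'none'}")
```

In this illustration the smaller run stays just under the EU threshold while the larger one crosses it, so a modest increase in scale moves a lab from no threshold-based obligations to the full systemic-risk regime.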

The Financial Reality of AI Safety Testing and Red-Teaming

One of the most significant emerging moats is the requirement for rigorous AI safety testing and adversarial "red-teaming". While essential for identifying vulnerabilities and preventing harmful outputs, the cost and complexity of these evaluations create a structural advantage for large labs.

Quantifying the Cost of Automated Testing

Research into automated security testing costs highlights the financial exposure faced by AI developers. Each adversarial probe—which may include prompt processing, tool calls, and multi-step reasoning—consumes thousands of tokens, making comprehensive testing expensive.

| Framework / Plugin Set | Probes Required | Estimated Cost (USD) |
| --- | --- | --- |
| OWASP GenAI Red Team | 24,780 | ~$1,239 |
| OWASP LLM Top 10 | 12,600 | ~$630 |
| ISO 42001 | 12,600 | ~$630 |
| EU AI Act | 6,300 | ~$315 |
| NIST AI RMF | 7,980 | ~$400 |

Note: Cost estimates based on $10 per 1 million tokens and ~5,000 tokens per probe.
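The table's figures follow directly from the stated assumptions: probes multiplied by tokens per probe, priced per million tokens. A minimal Python sketch of that arithmetic is shown here; the `campaign_cost_usd` helper and its `runs` parameter are hypothetical additions that illustrate how repeated runs multiply the bill.

```python
# Assumptions taken from the note above.
PRICE_PER_MILLION_TOKENS_USD = 10.0
TOKENS_PER_PROBE = 5_000

# Probe counts per framework, from the table above.
PROBES = {
    "OWASP GenAI Red Team": 24_780,
    "OWASP LLM Top 10": 12_600,
    "ISO 42001": 12_600,
    "EU AI Act": 6_300,
    "NIST AI RMF": 7_980,
}


def probe_cost_usd(probes: int) -> float:
    """Cost of one full pass: probes x tokens per probe x price per token."""
    total_tokens = probes * TOKENS_PER_PROBE
    return total_tokens * PRICE_PER_MILLION_TOKENS_USD / 1_000_000


def campaign_cost_usd(probes: int, runs: int) -> float:
    """Cost when the same probe set is repeated `runs` times (hypothetical)."""
    return probe_cost_usd(probes) * runs


if __name__ == "__main__":
    for name, count in PROBES.items():
        print(f"{name}: ${probe_cost_usd(count):,.0f} per pass")
```

A single pass is cheap, but the helper makes the scaling explicit: repeating the OWASP GenAI Red Team set 100 times, for example across several models and release candidates, would cost about $123,900 under the same assumptions.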

When these tests must be run across multiple models, environments, and iterations, the total budget for AI application security validation can become one of the most expensive stages of development. Large labs with multi-billion-dollar R&D budgets can absorb these costs as part of their "Responsible Scaling" policies, whereas startups often have to choose between thorough testing and financial viability.

The "Hallucination" Gap in Evaluation

Furthermore, the science of AI evaluation is still in its infancy. Automated red-teaming tools often rely on LLMs to evaluate other LLMs, leading to probabilistic and inconsistent results. Research from organizations like GiveWell indicates that while AI can surface concerns, it frequently "hallucinates" specific details, citing non-existent studies or providing unreliable quantitative estimates. This necessitates a high degree of human oversight—at least 75 minutes of human input per report—to identify which critiques have merit, further increasing the labor-intensive nature of safety compliance.

Industry-Specific Case Studies: Healthcare, Finance, and Higher Ed

The regulatory moats are not uniform; they manifest in specific ways across different sectors, often reflecting the unique risks and existing governance structures of those industries.

Healthcare and the Productivity Paradox

AI adoption in healthcare is estimated to lead to savings of $200 billion to $360 billion annually in the US alone by improving clinical operations and administrative efficiency. However, the sector lags others in adoption due to the high stakes of "quality and safety". The classification of medical AI as high-risk under the EU AI Act threatens to slow the pace of technological advancement by diverting funds from R&D to certification. For example, in spine surgery, where there is often significant disagreement among surgeons on treatment approaches, AI could provide a "legitimate clinical decision-making tool" to reduce waste and error. Yet, the complexity of meeting requirements for human oversight and data governance may consolidate AI development into a few "superstar" firms, marginalizing community-based innovation in digital health.

Financial Services and the "Smart Regulator"

The UK Financial Conduct Authority (FCA) has taken a proactive stance, aiming to support "safe and responsible adoption" of AI without introducing extra regulations. Instead, the FCA relies on existing frameworks, such as the Senior Managers and Certification Regime (SM&CR), to ensure accountability. This approach is intended to provide firms with the flexibility to adapt to technological change while balancing consumer protection. In contrast, the EU AI Act’s fintech-specific requirements—such as those for credit scoring—carry setup costs between €350,000 and €500,000, potentially creating a "regulatory barrier" for fintech startups trying to compete with established banks.

Higher Education and Foreign Influence

The Trump administration’s 2026 policies have extended the focus on AI into the realm of higher education and national security. A 2026 interagency partnership between the Department of Education (ED) and the Department of State has intensified the scrutiny of foreign gifts and contracts valued at $250,000 or more. This intensification is driven by concerns over foreign influence in the academic environment and is often linked to the protection of American AI intellectual property. New reporting portals and a potential lowering of the disclosure threshold to $50,000 signal an environment where universities must exercise unprecedented "due diligence," creating a significant administrative burden for research institutions.

Mechanisms for Re-Democratization: Sandboxes and Standards

In response to the growing concern that regulation is stifling innovation, several jurisdictions have introduced "regulatory sandboxes" and voluntary standards to bridge the gap between oversight and experimentation.

The Promise of Regulatory Sandboxes

Regulatory sandboxes are designed to provide a controlled environment where startups and technology firms can test innovative products under close supervision, often with the benefit of simplified procedures or temporary waivers.

EU AI Act Sandboxes: Member states are required to establish at least one national sandbox, with SMEs and startups receiving priority access free of charge.

UK FCA Sandbox: Evidence suggests that firms completing testing in the UK FCA sandbox received 6.6 times higher fintech investment and achieved market authorization 40% faster.

The SANDBOX Act (US): Proposed legislation in the US aims to facilitate regulatory learning to inform evidence-based rulemaking, though critics warn it could encourage "regulatory arbitrage" if not strictly limited in scope.

While sandboxes have attracted new innovators to the market, they also present risks of "government-conferred privilege," where only selected participants benefit from regulatory relief, potentially creating "government-backed monopolies" if the selection process is not transparent.

NIST AI RMF: A Tool for SMEs?

The NIST AI Risk Management Framework (AI RMF), released in 2023, provides a voluntary, flexible framework for organizations to manage AI risks across the lifecycle. For small businesses, the AI RMF recommends starting with "mapping" intended AI use cases and stakeholders, focusing on measurable risks, and leveraging existing templates to reduce the burden of building processes from scratch. However, the framework is not a "silver bullet" and requires a culture of risk management that many small teams find difficult to sustain without dedicated personnel.

The Paradox of Open Source and Systemic Risk

The debate over open-source AI is at the heart of the regulatory moat discussion. Meta and a coalition of progressives and libertarians have argued that an open approach is safer and more likely to promote innovation because it allows researchers to "stress test" models and identify vulnerabilities. Conversely, many prominent AI companies and national security experts push for limits on who can access the most powerful models.

The EU AI Act attempts a compromise by exempting open-source models from certain documentation requirements, but only if they do not pose "systemic risks". This creates a "qualified alert" system where models that reach state-of-the-art capability must still comply with rigorous risk mitigation obligations (Article 55), even if they are released for free. For open-source developers, this means that "safety must be assessed before release," a requirement that can be prohibitively expensive for non-corporate research groups.

Synthesis: The Emerging Multi-Tiered Market Structure

The evidence gathered across these diverse jurisdictions and sectors suggests that AI regulation is not merely a set of rules; it is a mechanism of industrial policy that is reshaping the market into a tiered structure.

The Tier of the Incumbent Hyperscalers: These firms possess the compute, the data, and the regulatory expertise to navigate any framework. They benefit from "regulatory capture" and "vertical integration," and they use the safety narrative to lobby for standards that solidify their dominance.

The Tier of the "Co-Opetition" Scaleups: Well-funded startups like OpenAI and Anthropic, though technically competitors, are deeply tethered to hyperscale infrastructure and are increasingly aligned with incumbents on regulatory safety standards.

The Tier of the Constrained Innovators: SMEs and independent labs face a "regulatory cliff". They are hampered by high compliance costs, delayed access to frontier models, and the "productivity paradox" of being forced to spend more on bureaucracy than on research.

The Tier of the Open Source "Pandas": Groups focusing on decentralization and open weights are fighting a rear-guard action to maintain an open research environment, but they face increasing pressure from "systemic risk" designations that could make open-source development of frontier models legally and financially unviable.

The transatlantic divergence between the EU and the US further complicates this picture. The US’s deregulatory moat allows for a faster pace of development, potentially relegating the EU to a "regulatory island" where citizens have better protections but fewer domestic technological champions.

In conclusion, the intersection of AI regulation and institutional moats is creating a landscape where the "intrinsic characteristic that gives the business a durable competitive advantage" is no longer just the product—it is the ability to sustain the enormous financial and organizational overhead of being a "responsible" AI actor. As governance frameworks like the EU AI Act and the US National Policy Framework become fully operational in 2026, the real winners will be those who can convert regulatory compliance into a barrier to entry, ensuring that the future of intelligence remains a "minimally burdensome" affair for the few, and a prohibitively expensive one for the many.
